Agent-First Testing: Build Quality Into Every AI Coding Session

Shiplight AI Team

Updated on April 11, 2026

> Agent-first testing is a software quality approach where the AI coding agent that writes code is also responsible for verifying it — opening a real browser, confirming the UI works, and generating a test file — as part of every development session.

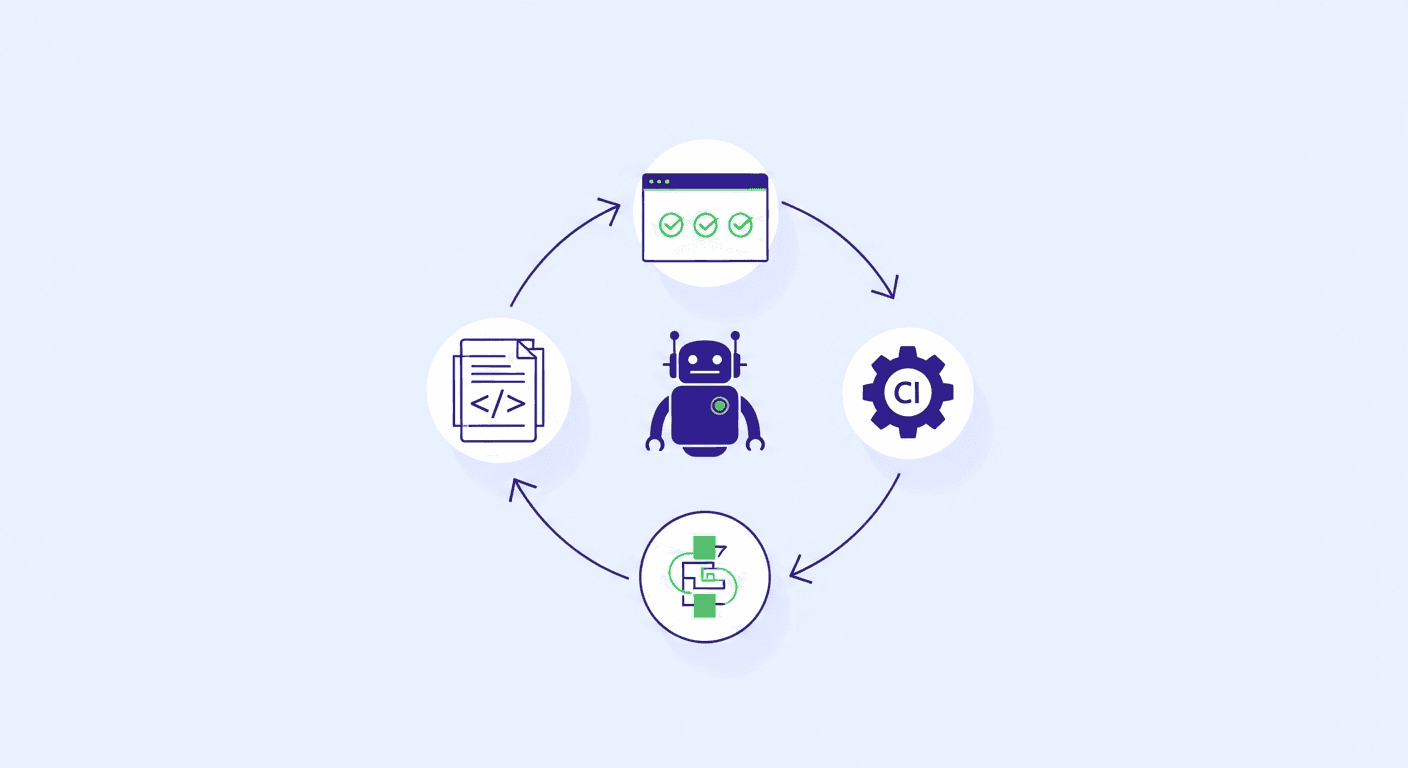

Agent-first testing embeds automated verification directly into the AI coding agent's workflow rather than adding it afterward. The agent writes code, opens a real browser, verifies the change works, and saves the verification as a test, all in one loop and without leaving the development session.

This is a direct response to a structural problem in agent-first development: AI coding agents ship code faster than traditional QA cycles can absorb. When an agent can implement a feature in minutes, a testing workflow that requires hours of separate work cannot keep pace with development.

---

Traditional QA assumes a handoff. A developer finishes a feature, opens a PR, a reviewer checks the diff, QA runs tests. The gap between "code written" and "code verified" is measured in hours or days.

AI coding agents collapse the "code written" side to minutes. The handoff gap doesn't shrink — it becomes the dominant bottleneck. OpenAI has named this directly: as agents write more code, human QA becomes the constraint on shipping velocity.

The human QA bottleneck in agent-first teams manifests in three ways:

Agent-first testing closes all three gaps by making the agent itself responsible for verification.

In an agent-first testing workflow, the coding agent doesn't just write code — it completes a full verification loop:

VERIFY statements to confirm expected state

The key difference from traditional E2E testing: the test is created during development, not after. The agent that knows why the code was written also writes the test that proves it works.

    goal: Verify new checkout discount field after agent implementation
    base_url: http://localhost:3000
    statements:
      - navigate: /cart
      - intent: Add item to cart
        action: click
      - navigate: /checkout
      - intent: Enter discount code
        action: fill
        value: "SAVE20"
      - intent: Apply discount
        action: click
      - VERIFY: Order total shows 20% discount applied
      - intent: Complete checkout with test card
        action: fill
        value: "4242424242424242"
      - VERIFY: Order confirmation page displays with order number

This test is readable by any engineer, lives in the git repo, appears in PR diffs, and self-heals when the UI changes. The agent that implemented the discount field also wrote this test in the same session.
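Because the test is plain structured data, its shape can be checked mechanically during review. The following is a minimal sketch of such a check, written against the example test once it has been parsed into a Python dict. The rules it enforces (the three top-level keys, the `click` and `fill` actions, at least one `VERIFY`) are assumptions inferred from the example, not Shiplight's documented schema, and `validate_test` is a hypothetical helper.

```python
# Hypothetical validator: the schema below is inferred from the example test,
# not taken from Shiplight's documentation.
ALLOWED_ACTIONS = {"click", "fill"}  # only the actions that appear in the example


def validate_test(test: dict) -> list[str]:
    """Return a list of problems found in a parsed test document."""
    problems = []
    for key in ("goal", "base_url", "statements"):
        if key not in test:
            problems.append(f"missing top-level key: {key}")

    statements = test.get("statements", [])
    for i, step in enumerate(statements):
        # navigate and VERIFY steps carry a single value and need no action
        if "navigate" in step or "VERIFY" in step:
            continue
        if "intent" not in step:
            problems.append(f"step {i}: expected navigate, intent, or VERIFY")
            continue
        action = step.get("action")
        if action not in ALLOWED_ACTIONS:
            problems.append(f"step {i}: unknown action {action!r}")
        elif action == "fill" and "value" not in step:
            problems.append(f"step {i}: fill needs a value")

    if not any("VERIFY" in step for step in statements):
        problems.append("test has no VERIFY statement")
    return problems


# The example test from above, as it would look after YAML parsing.
example = {
    "goal": "Verify new checkout discount field after agent implementation",
    "base_url": "http://localhost:3000",
    "statements": [
        {"navigate": "/cart"},
        {"intent": "Add item to cart", "action": "click"},
        {"navigate": "/checkout"},
        {"intent": "Enter discount code", "action": "fill", "value": "SAVE20"},
        {"intent": "Apply discount", "action": "click"},
        {"VERIFY": "Order total shows 20% discount applied"},
        {"intent": "Complete checkout with test card", "action": "fill",
         "value": "4242424242424242"},
        {"VERIFY": "Order confirmation page displays with order number"},
    ],
}
```

Calling `validate_test(example)` returns an empty list. A test with no `VERIFY` step would be flagged, which is a useful guard against agent-generated tests that click through a flow without asserting anything.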

MCP (Model Context Protocol) is the technical foundation that makes agent-first testing practical. It lets AI coding agents call external tools — including browser automation — without leaving the coding workflow.

With the Shiplight Plugin installed, an agent in Claude Code, Cursor, or Codex can:

.test.yaml in the repo

    # Install in Claude Code
    claude mcp add shiplight -- npx -y @shiplightai/mcp@latest

    # Install in Cursor (add to .cursor/mcp.json)
    {
      "mcpServers": {
        "shiplight": {
          "command": "npx",
          "args": ["-y", "@shiplightai/mcp@latest"]
        }
      }
    }

The agent can now verify its own work in a real browser as part of every development session, not as a separate step but as a natural continuation of the coding workflow.
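If `.cursor/mcp.json` already lists other MCP servers, pasting the snippet over the file would drop them. A small script can merge the entry instead. This is a sketch under that assumption; `add_mcp_server` is a hypothetical helper, and the server entry is copied from the Cursor configuration above.

```python
import json
from pathlib import Path

# Server entry copied from the Cursor snippet above.
SHIPLIGHT_SERVER = {"command": "npx", "args": ["-y", "@shiplightai/mcp@latest"]}


def add_mcp_server(config_path: Path, name: str, server: dict) -> dict:
    """Add or update one server entry, keeping servers that are already configured."""
    config = {}
    if config_path.exists():
        config = json.loads(config_path.read_text(encoding="utf-8"))

    # Create the "mcpServers" section if it is missing, then set our entry.
    config.setdefault("mcpServers", {})[name] = server

    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

From the project root, `add_mcp_server(Path(".cursor/mcp.json"), "shiplight", SHIPLIGHT_SERVER)` would write the entry while leaving any other configured servers untouched.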

A complete agent-first testing setup has four layers:

The agent verifies changes in a real browser during development. This catches bugs before the PR is even opened — when fixing them is cheapest.

A fast smoke suite (under 5 minutes) runs on every PR against staging. Covers the 5–10 most critical user flows. Blocks merges when flows break.

The complete test suite runs on merge to main. Catches regressions across the full product surface. Slower is acceptable here — it's not blocking PR review.

Tests use intent-based locators that self-heal when the UI changes. Agent-generated code changes the UI constantly — without self-healing, tests break faster than agents can fix them.

This four-layer stack is the implementation of a two-speed E2E testing strategy: fast local verification during development, reliable regression coverage in CI.
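The first three layers differ mainly in which tests run on which trigger. A minimal sketch of that selection logic follows. The trigger names (`session`, `pull_request`, `merge_to_main`) and the `E2ETest` fields are illustrative assumptions, not part of any Shiplight API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class E2ETest:
    name: str
    critical: bool         # one of the 5-10 most critical user flows
    touched: bool = False  # covers the change made in the current session


def select_tests(tests: list[E2ETest], trigger: str) -> list[E2ETest]:
    """Pick the tests to run for a given trigger (hypothetical trigger names)."""
    if trigger == "session":        # layer 1: in-session verification
        return [t for t in tests if t.touched]
    if trigger == "pull_request":   # layer 2: fast smoke suite on every PR
        return [t for t in tests if t.critical]
    if trigger == "merge_to_main":  # layer 3: full regression suite
        return list(tests)
    raise ValueError(f"unknown trigger: {trigger}")


suite = [
    E2ETest("checkout-discount", critical=True, touched=True),
    E2ETest("login", critical=True),
    E2ETest("profile-avatar-upload", critical=False),
]
```

With this suite, a session run covers only `checkout-discount`, a pull request runs the two critical flows, and a merge to main runs all three. The fast paths stay small by construction while the slow path stays complete.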

| | Traditional QA | Agent-First Testing |
|---|---|---|
| When tests are written | After code ships | During development |
| Who writes tests | QA engineers | The coding agent |
| Verification timing | Hours to days after PR | Before PR is opened |
| Test format | Playwright/Selenium scripts | YAML (human-readable) |
| Maintenance | Manual selector updates | AI self-healing |
| Velocity impact | Slows release cadence | Scales with agent speed |
| Context | QA interprets requirements | Agent knows the intent |

The fundamental shift: quality moves from a gate at the end of the pipeline to a property of the development loop itself.

If you're using Claude Code, Cursor, or Codex, you can add agent-first testing to your workflow today:

Step 1: Install the Shiplight Plugin (free, no account required):

    claude mcp add shiplight -- npx -y @shiplightai/mcp@latest

Step 2: On your next code change, ask the agent to verify it:

> "Verify that the change you just made works correctly in a real browser and save it as a test."

Step 3: Review the generated .test.yaml file in the PR diff. Confirm it covers the right behavior.

Step 4: Add the test to your CI smoke suite. It will run automatically on every future PR.

Step 5: Expand coverage incrementally — one test per meaningful feature change.

Within a week, you'll have a growing suite of tests that were generated during development, cover real user flows, and need little maintenance because their locators self-heal. See QA for the AI coding era for how this fits into a broader quality strategy for fast-moving teams.

Agent-first testing is a QA approach where the AI coding agent is responsible for verifying its own output — opening a real browser, confirming the UI works, and generating a test file — as part of every development session. It contrasts with traditional QA, where testing is a separate step performed by a separate team after code is written.

Agentic QA testing uses AI agents to automate the QA process — generating, running, and maintaining tests. Agent-first testing specifically integrates verification into the coding agent's workflow, so the same agent that writes code also proves it works. Agentic QA can operate independently of the development workflow; agent-first testing is embedded within it.

Not entirely. Agent-first testing automates regression verification and in-session smoke testing. Manual exploratory testing — finding unexpected bugs through creative investigation — still adds value. The best teams use agent-first testing to eliminate repetitive manual regression and free QA engineers for higher-value exploratory work.

Shiplight Plugin works with Claude Code, Cursor, and Codex via MCP. Any coding agent that supports MCP servers can integrate agent-first testing into its workflow.

Agent-first tests written with intent-based YAML self-heal automatically. When a button is renamed or a component is refactored, the AI resolves the correct element from the live DOM using the step's intent description rather than a stale CSS selector. Tests survive the constant UI changes that agent-generated code produces.
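The resolve-by-intent idea can be illustrated with a toy resolver. This is a sketch only: it is not Shiplight's implementation, plain string similarity from Python's `difflib` stands in for the AI model, and the page is reduced to a hypothetical mapping of selectors to visible labels.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity between two strings, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def resolve_selector(intent: str, cached_selector: str, dom: dict[str, str],
                     threshold: float = 0.5) -> str:
    """Return a selector for the step, healing it if the cached one is stale.

    dom maps each selector currently on the page to its visible label.
    """
    # Fast path: the selector recorded earlier still exists on the page.
    if cached_selector in dom:
        return cached_selector

    # Heal: choose the element whose label best matches the step's intent.
    best = max(dom, key=lambda sel: similarity(intent, dom[sel]), default=None)
    if best is None or similarity(intent, dom[best]) < threshold:
        raise LookupError(f"no element matches intent {intent!r}")
    return best


# The button was renamed in a refactor, so the cached "#apply-btn" is gone.
page = {
    "#promo-submit": "Apply discount code",
    "#checkout-pay": "Pay now",
    "#cart-remove": "Remove item",
}
healed = resolve_selector("Apply discount", "#apply-btn", page)
```

Here `healed` is `"#promo-submit"`: the stale selector is skipped and the step's intent picks out the renamed button. The threshold keeps the resolver from silently clicking an unrelated element when nothing on the page resembles the intent.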

---

Related: agent-first development · what is agentic QA testing · YAML-based testing · intent-cache-heal pattern · two-speed E2E strategy

Get started with Shiplight Plugin — free, no account required. Add agent-first testing to your Claude Code, Cursor, or Codex workflow in one command.